We have data flowing end to end: source websites → JSON → database → website 🎉

Architecture

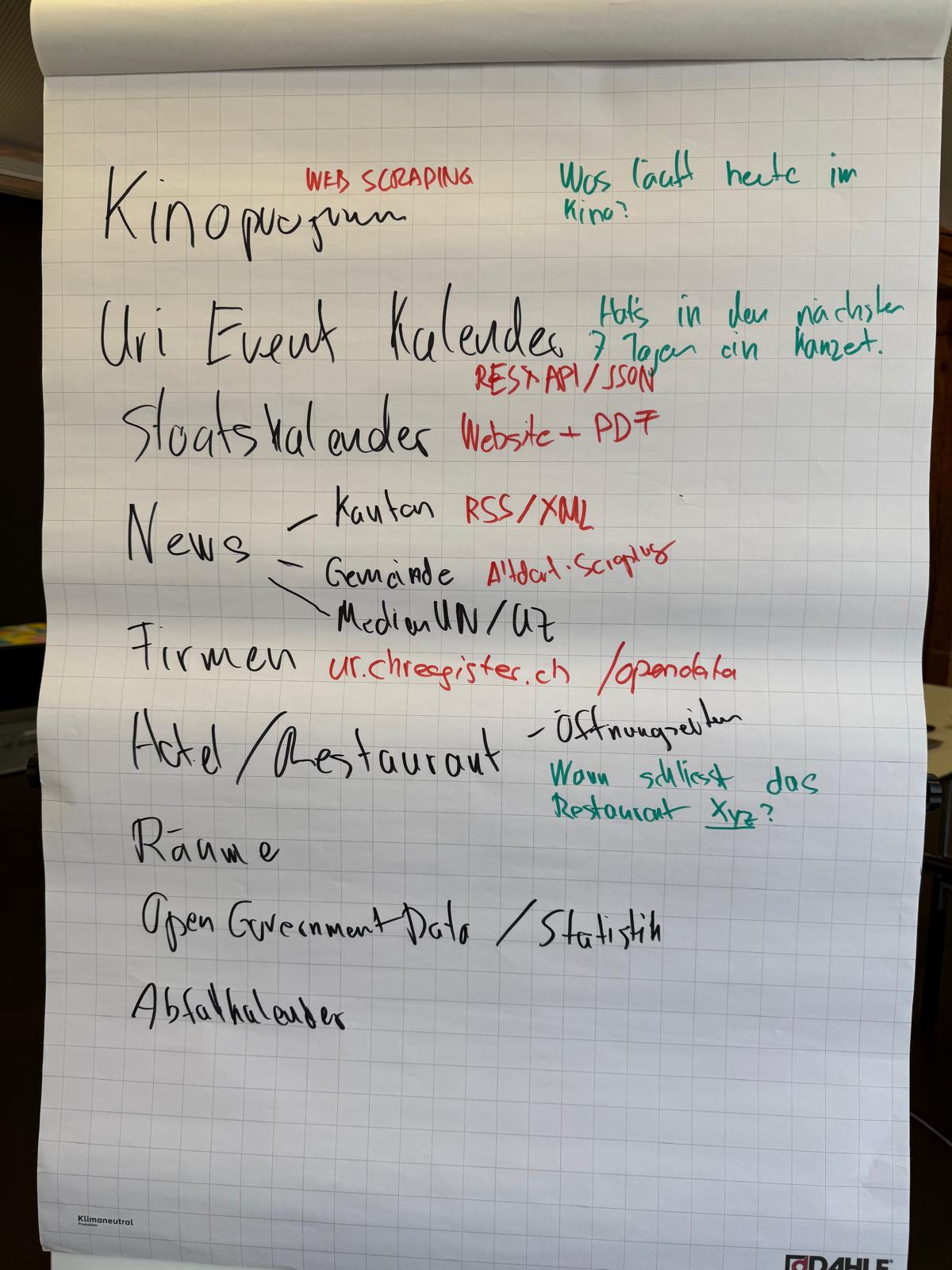

6 Local Sites → Python Scrapers → events.json → PostgreSQL → Flask API → Solid.js Frontend
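The events.json → PostgreSQL step can be sketched roughly as follows. This is a minimal illustration, not the project's db/parse_json.py: it swaps in Python's built-in sqlite3 for PostgreSQL so it runs without a server, and the table layout and the `source`, `title`, `date` and `time` keys are assumptions. PostgreSQL supports the same `INSERT ... ON CONFLICT` clause.

```python
import sqlite3
import uuid

# Hypothetical schema mirroring the description: UUID keys and a foreign
# key from events to sources. sqlite3 stands in for PostgreSQL here.
SCHEMA = """
CREATE TABLE IF NOT EXISTS sources (
    id   TEXT PRIMARY KEY,
    name TEXT NOT NULL UNIQUE
);
CREATE TABLE IF NOT EXISTS events (
    id        TEXT PRIMARY KEY,
    source_id TEXT NOT NULL REFERENCES sources(id),
    title     TEXT NOT NULL,
    date      TEXT NOT NULL,
    time      TEXT
);
"""


def get_or_create_source(conn: sqlite3.Connection, name: str) -> str:
    """Insert the source if it is missing, then return its id."""
    conn.execute(
        "INSERT INTO sources (id, name) VALUES (?, ?) ON CONFLICT(name) DO NOTHING",
        (str(uuid.uuid4()), name),
    )
    return conn.execute("SELECT id FROM sources WHERE name = ?", (name,)).fetchone()[0]


def ingest(conn: sqlite3.Connection, events: list) -> None:
    """Resolve each event's source, then insert the event row."""
    with conn:  # commits on success, rolls back if any insert fails
        for ev in events:
            source_id = get_or_create_source(conn, ev["source"])
            conn.execute(
                "INSERT INTO events (id, source_id, title, date, time) VALUES (?, ?, ?, ?, ?)",
                (str(uuid.uuid4()), source_id, ev["title"], ev["date"], ev.get("time")),
            )


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.execute("PRAGMA foreign_keys = ON")  # SQLite leaves FK checks off by default
    conn.executescript(SCHEMA)
    ingest(conn, [
        {"source": "KBU", "title": "Konzert", "date": "2024-05-01", "time": "19:30"},
        {"source": "KBU", "title": "Lesung", "date": "2024-05-02"},
    ])
    print(conn.execute("SELECT COUNT(*) FROM sources").fetchone()[0])
```

Selecting the id after the insert covers both the new and the existing source. In PostgreSQL, `ON CONFLICT (name) DO UPDATE SET name = EXCLUDED.name RETURNING id` would do the same in a single statement.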

How Far We've Come

Done

- 6 working scrapers (Urner Wochenblatt, KBU, Musikschule Uri, Schulen Altdorf, Gemeinde Altdorf, Gemeinde Andermatt): a mix of custom HTML parsers and RSS feeds

- Central orchestrator (scraping/scraping.py) with deduplication by title+date+time

- 400+ scraped events in events/events.json

- PostgreSQL schema with events + sources tables, UUID keys, foreign keys

- Data ingestion pipeline (db/parse_json.py) that upserts sources and inserts events

- Flask REST API (api/app.py) — GET /api/events?date=YYYY-MM-DD and GET /api/sources, CORS enabled

- Frontend skeleton (frontend/src/): Solid.js + Tailwind, with styled Header and Card components
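The orchestrator's deduplication by title+date+time could look roughly like the sketch below. The function name `dedupe_events` is hypothetical, and the whitespace and case normalization of titles is an assumption added for illustration; the notes above only say the key is title, date and time.

```python
from typing import Dict, Iterable, List, Optional, Set, Tuple

Key = Tuple[str, str, Optional[str]]


def dedupe_events(events: Iterable[Dict]) -> List[Dict]:
    """Keep the first event seen for each (title, date, time) key."""
    seen: Set[Key] = set()
    unique: List[Dict] = []
    for ev in events:
        # Assumption: collapse whitespace and ignore case in titles, so the
        # same event listed by two sites with cosmetic differences still matches.
        title = " ".join(ev["title"].split()).casefold()
        key: Key = (title, ev["date"], ev.get("time"))
        if key in seen:
            continue  # duplicate: an earlier source already listed this event
        seen.add(key)
        unique.append(ev)
    return unique
```

Because the first occurrence wins, the order in which the orchestrator runs the scrapers decides which source's copy of a duplicated event ends up in events.json.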

We're setting up Digital Cluster Uri as an issuer for Swiyu credentials. The onboarding process requires an approval step via email, which may take a while :(

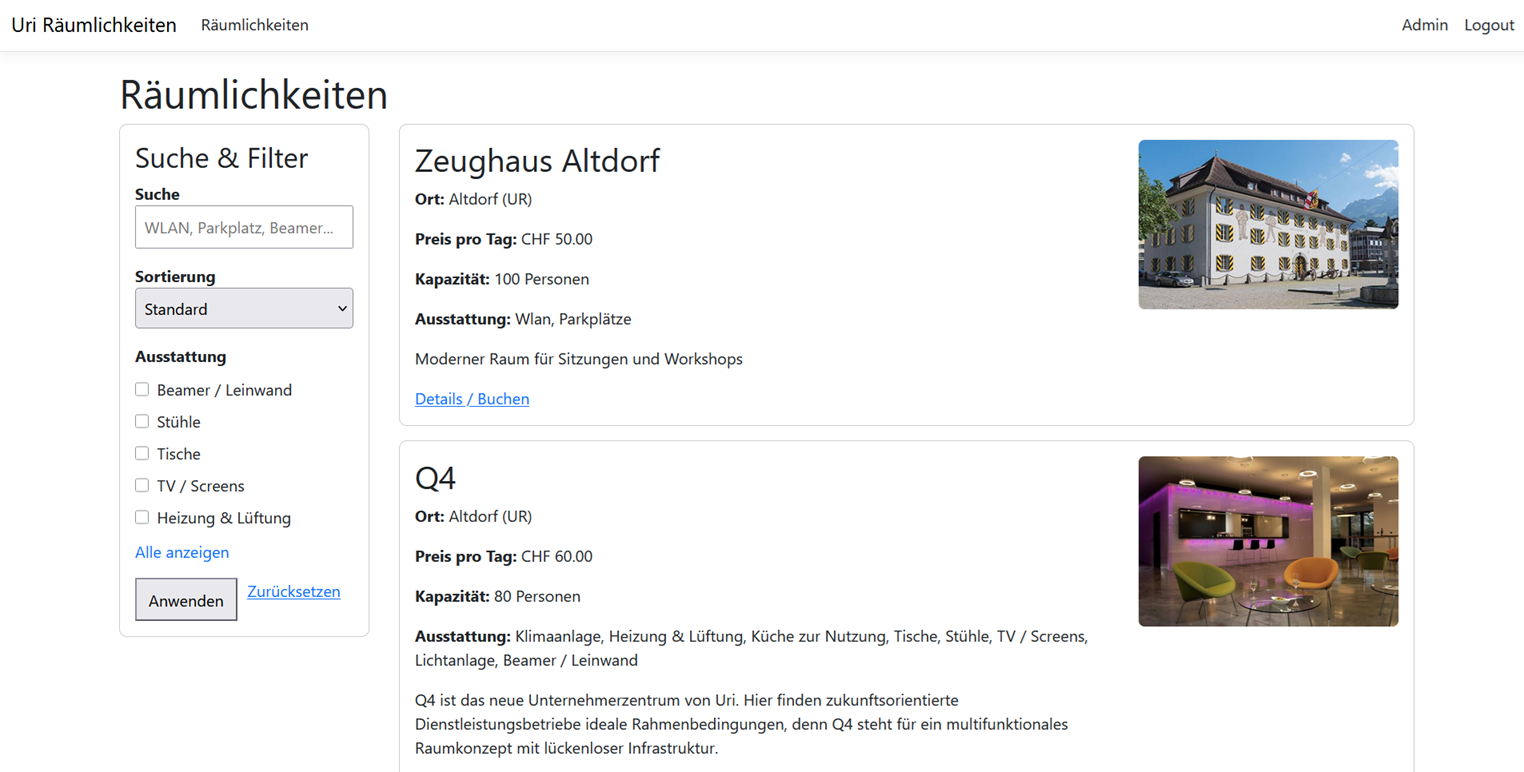

A first look at the website

We are going to test the Nextcloud Helm charts: https://github.com/nextcloud/helm

https://kubernetes.build/ will be our development environment.

@janikvonrotz@fosstodon.org and @Greenheart@fosstodon.org are working on the project.

We've broken the work down into three groups: Said is building the web frontend, Simon is working on the data schema and deduplication logic, and Flavio and Kait are writing HTML scraping scripts that extract event info into a specified JSON format.
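One way to pin down a shared JSON format for the scrapers is a small dataclass that validates each record before it is serialized. The field names (`title`, `date`, `time`, `source`, `url`) and the `to_json` helper are hypothetical; the project's actual format is not spelled out here.

```python
import datetime as dt
import json
from dataclasses import asdict, dataclass
from typing import List, Optional


@dataclass
class Event:
    """Hypothetical record shape; the real field names may differ."""
    title: str
    date: str                  # ISO date, e.g. "2024-06-01"
    time: Optional[str]        # "HH:MM", or None when no start time is listed
    source: str                # e.g. "Urner Wochenblatt"
    url: Optional[str] = None  # link back to the original listing

    def __post_init__(self) -> None:
        # Fail fast on malformed values so bad rows never reach the database.
        if not self.title.strip():
            raise ValueError("title must not be empty")
        dt.date.fromisoformat(self.date)  # raises ValueError if not an ISO date
        if self.time is not None:
            dt.time.fromisoformat(self.time)  # raises ValueError if not an ISO time


def to_json(events: List[Event]) -> str:
    """Serialize events as a JSON array, keeping umlauts readable."""
    return json.dumps([asdict(e) for e in events], ensure_ascii=False, indent=2)
```

A scraper would build `Event(...)` objects; a date such as "01.06.2024" copied straight from a Swiss site would raise `ValueError` instead of slipping into events.json unnoticed.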

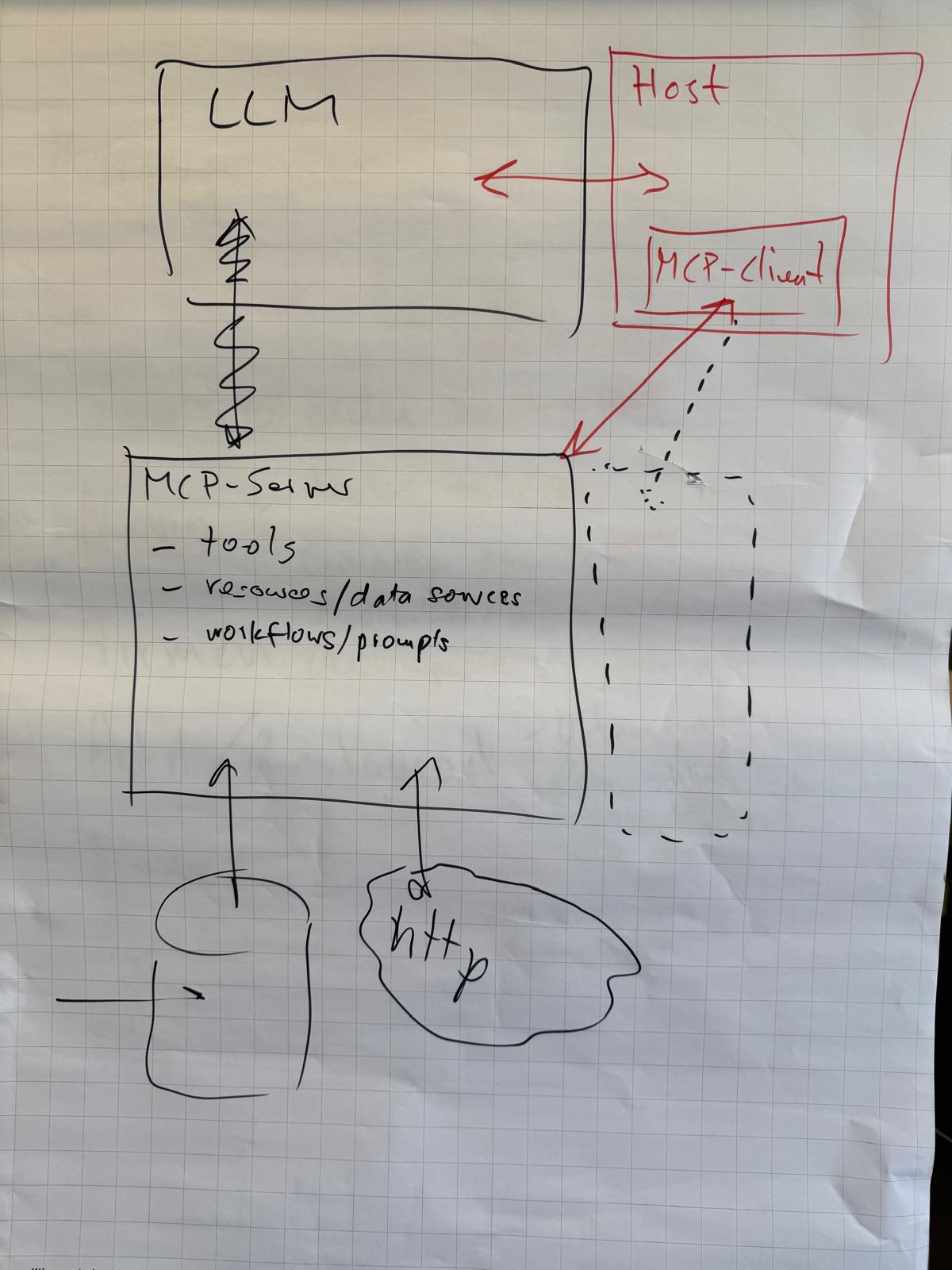

The concept for connecting LLMs / chats to MCP servers is taking shape. :-)

* dribs n. pl.: in small amounts, a few at a time